FinBERT Research Project

Period: 06/2022 - 08/2022

Project Name: Evaluation of FinBERT Performance on Multi-Class ESG Classification Task based on the MSCI Framework

GitHub: Go to GitHub

Machine Learning, Data Collection, Data Preprocessing, Python, TensorFlow, PyTorch, Keras, Naïve Bayes, Logistic Regression, SVM, Random Forest, MLP, CNN, LSTM, Bi-LSTM, GRU, BERT, FinBERT, ESG

What's the Project For?

- A project under the Undergraduate Research Opportunities Program (UROP) at HKUST, on the topic of Deep Learning in NLP.

- To evaluate the performance of FinBERT, a large language model adapted to the financial domain, on multi-class classification of ESG categories based on the MSCI framework.

Responsibilities

- Collected and preprocessed data for machine learning, including labeling the text with ESG categories.

- Trained and fine-tuned 11 machine learning models for performance evaluation using TensorFlow and PyTorch:

  - Naïve Bayes

  - Logistic Regression

  - Linear Support Vector Machine (SVM)

  - Random Forest

  - Multi-Layer Perceptron (MLP)

  - Convolutional Neural Network (CNN)

  - Long Short-Term Memory (LSTM)

  - Bidirectional Long Short-Term Memory (Bi-LSTM)

  - Gated Recurrent Unit (GRU)

  - Bidirectional Encoder Representations from Transformers (BERT)

  - FinBERT, a large language model (LLM) developed for the financial context

Most Challenging Part of the Project?

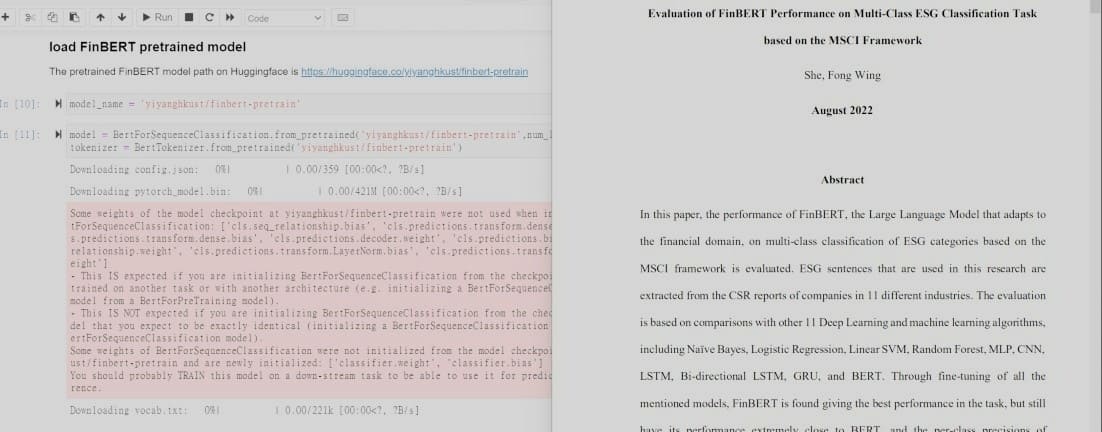

The most challenging part was preparing the code for BERT fine-tuning. I had never studied the BERT model or used PyTorch before, so it took me some time to understand how to load the model and tokenizer and how to define the training arguments for fine-tuning.

The problem turned out to be unexpectedly easy to solve: my supervisor had provided sample code for fine-tuning the FinBERT model, and in the end only a few amendments to that sample were needed to fine-tune BERT. Although the solution was simple, it led me to do a lot of research on the BERT model and gain some basic knowledge about it. It was a rewarding learning process, and I enjoyed it a lot!
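To illustrate the steps mentioned above (loading the model and tokenizer, then defining training arguments), here is a minimal sketch. It assumes the Hugging Face `transformers` library, which this write-up does not name. The checkpoint name, the three-class label set, the output directory, and every hyperparameter are placeholders chosen for illustration, not the project's actual settings.

```python
# Sketch only: assumes the Hugging Face `transformers` library (with PyTorch).
# The label set and hyperparameters below are illustrative placeholders.

LABELS = ["Environmental", "Social", "Governance"]  # placeholder classes
LABEL2ID = {label: idx for idx, label in enumerate(LABELS)}
ID2LABEL = {idx: label for label, idx in LABEL2ID.items()}


def load_model_and_tokenizer(model_name="bert-base-uncased"):
    """Load a tokenizer and a classification model for the given checkpoint."""
    # Imported lazily so the label mappings above stay usable without the
    # heavy dependencies installed.
    from transformers import AutoModelForSequenceClassification, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_name)
    model = AutoModelForSequenceClassification.from_pretrained(
        model_name,
        num_labels=len(LABELS),  # size of the new classification head
        id2label=ID2LABEL,
        label2id=LABEL2ID,
    )
    return tokenizer, model


def make_training_args(output_dir="bert-esg"):
    """Define training arguments; all values are assumed, not from the project."""
    from transformers import TrainingArguments

    return TrainingArguments(
        output_dir=output_dir,
        num_train_epochs=3,
        per_device_train_batch_size=16,
        learning_rate=2e-5,
        weight_decay=0.01,
    )


def build_trainer(train_dataset, eval_dataset, model_name="bert-base-uncased"):
    """Combine model and arguments in a Trainer.

    Both datasets are expected to be tokenized already and to carry a
    `labels` column holding the integer ids from LABEL2ID.
    """
    from transformers import Trainer

    tokenizer, model = load_model_and_tokenizer(model_name)
    trainer = Trainer(
        model=model,
        args=make_training_args(),
        train_dataset=train_dataset,
        eval_dataset=eval_dataset,
    )
    return tokenizer, trainer
```

In a setup like this, moving from one BERT-style checkpoint to another mostly comes down to changing `model_name`, which fits the observation that only a few amendments were needed.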